I. Descriptive to Predictive to Prescriptive Analytics and Beyond

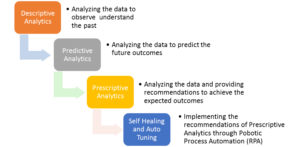

In layman’s terms, analytics is logical analysis, and modelling is the process of creating hypothetical inferences. Descriptive Analytics is the analysis performed on collected data to understand what has happened, why an event might have occurred, who could be responsible for it, and so on. It gives us insight into the past. [1]

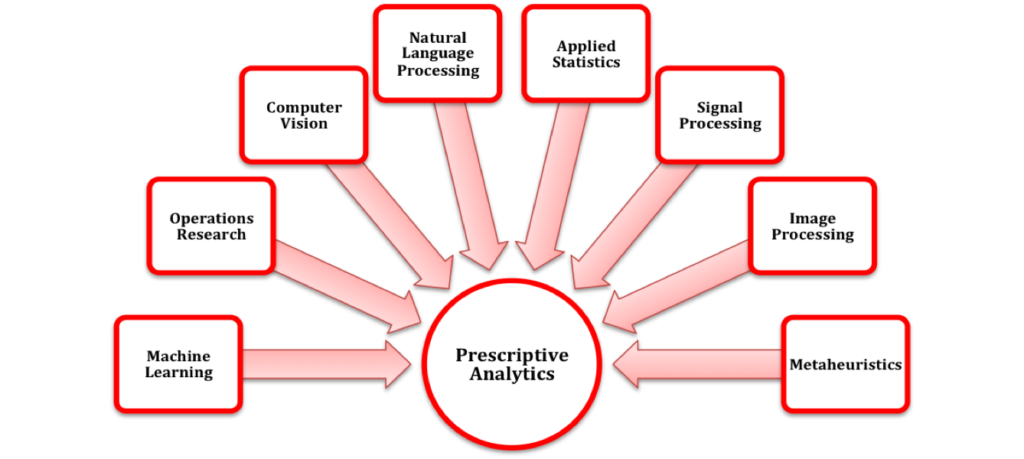

Using analytics to extrapolate and predict future events is termed Predictive Analytics. The next step, Prescriptive Analytics, analyzes the data and recommends actions to achieve a specific target when the predicted future deviates from that target. We take this a step further and propose the automated implementation of the prescribed recommendations, which we have termed self-healing or auto-tuning. Self-healing has many applications, but here we examine one specific use case: server responsiveness under future workloads.

For example, let us assume that a certain user load “X1” acts on a system with configuration “Y1”, and that this combination has been proven to adhere to a set of Service Level Agreements (SLAs), “Z1”. Predictive analytics could tell us what our future user load will be, say “X2”, and whether our current configuration “Y1” would still adhere to the same SLAs “Z1” under user load “X2”. Suppose the predictions state that, for the future load “X2” with the current configuration “Y1”, SLAs “Z1” cannot be achieved; instead there would be a deviation, and the achievable SLAs would be “Z2”. If SLAs “Z1” must still be met for user load “X2”, prescriptive analytics would recommend a new configuration, “Y2”, that achieves them. Self-healing is then the process in which the system configuration or architecture automatically reconfigures or tunes itself to apply the prescribed configuration “Y2” and thereby meet SLAs “Z1”.
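The X/Y/Z reasoning above can be sketched in Python under a deliberately simple, hypothetical performance model: response time is a fixed overhead plus a per-user cost shared evenly across servers, configuration is reduced to a server count, and the SLA to an average response-time ceiling. The constants `BASE_MS` and `MS_PER_USER`, the function names and all numbers are illustrative assumptions, not measurements; a real predictive model would be fitted to collected data.

```python
import math

# Hypothetical performance model (illustrative constants, not measurements):
# a fixed per-request overhead plus a per-user cost shared evenly by servers.
BASE_MS = 200.0
MS_PER_USER = 2.0


def predict_response_ms(users: int, servers: int) -> float:
    """Predicted average response time for a user load on a configuration."""
    return BASE_MS + MS_PER_USER * users / servers


def meets_sla(users: int, servers: int, sla_ms: float) -> bool:
    return predict_response_ms(users, servers) <= sla_ms


def prescribe_servers(users: int, sla_ms: float) -> int:
    """Smallest server count whose predicted response time meets the SLA."""
    if sla_ms <= BASE_MS:
        raise ValueError("SLA is unreachable under this model")
    # Rearranged from BASE_MS + MS_PER_USER * users / servers <= sla_ms.
    return math.ceil(MS_PER_USER * users / (sla_ms - BASE_MS))


X1, Y1, Z1 = 1000, 4, 1000.0   # current load, servers, SLA in ms
X2 = 2500                      # predicted future load

print(meets_sla(X1, Y1, Z1))       # True  (700 ms)
Z2 = predict_response_ms(X2, Y1)   # achievable SLA if Y1 is kept
print(Z2)                          # 1450.0
Y2 = prescribe_servers(X2, Z1)
print(Y2)                          # 7
```

Under these assumptions, keeping four servers at the predicted load would degrade the achievable response time to 1450 ms (“Z2”), while seven servers (“Y2”) bring it back within the 1000 ms SLA (“Z1”).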

Note: This is only one example. There could be countless other use cases where self-healing could be implemented.

II. Self-Healing Process Elaborated

We have classified the self-healing process into seven logical, generic steps (although each step has multiple sub-steps that must be completed before proceeding to the next). Listed below are the steps in the Self-Healing or Auto-Tuning process:

A. Defining Objective

Setting objectives requires deep domain knowledge. In our use case of self-healing architectures, the person setting them must have an in-depth understanding of the system architecture, its configurations, capabilities and limitations.
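Domain knowledge matters here because an objective only becomes useful once it is measurable. A minimal sketch, assuming the objective is expressed as an SLA on 95th-percentile response time and error rate at a target user load; the `Objective` class, its field names and the thresholds are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Objective:
    """Hypothetical encoding of an objective as a measurable SLA target."""
    name: str
    target_users: int        # load the system must sustain
    max_p95_ms: float        # 95th-percentile response-time ceiling
    max_error_rate: float    # tolerated fraction of failed requests

    def is_met(self, p95_ms: float, error_rate: float) -> bool:
        return p95_ms <= self.max_p95_ms and error_rate <= self.max_error_rate


checkout = Objective("checkout", target_users=2500,
                     max_p95_ms=1000.0, max_error_rate=0.01)
print(checkout.is_met(p95_ms=914.0, error_rate=0.002))   # True
print(checkout.is_met(p95_ms=1450.0, error_rate=0.002))  # False
```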

B. Gathering, Analyzing and Preparing Data

This step begins with gathering data and assessing whether it is sufficient. We then analyze it to understand whether any additional data is required and whether there are any limitations. If there are outliers or improper data, we may have to clean it (removing inaccurate, duplicate or outdated data, etc.) or transform it into the format required for analysis.
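As an illustration of the cleaning step, the sketch below assumes the data is a list of `(timestamp, response_ms)` samples. It drops missing values, samples older than a cutoff and duplicate timestamps, then removes outliers using the modified z-score based on the median absolute deviation (MAD). The MAD rule is one common robust choice, picked here as an assumption, and the 3.5 limit is a conventional default rather than a value from this article; the sample data is hypothetical.

```python
from __future__ import annotations

import statistics
from typing import Optional


def clean(records: list[tuple[int, Optional[float]]], cutoff_ts: int,
          z_limit: float = 3.5) -> list[tuple[int, float]]:
    """Drop missing, outdated, duplicate and outlying (timestamp, value) samples."""
    seen: set[int] = set()
    kept: list[tuple[int, float]] = []
    for ts, value in records:
        if value is None or ts < cutoff_ts or ts in seen:
            continue  # improper, outdated or duplicate sample
        seen.add(ts)
        kept.append((ts, value))
    if len(kept) < 3:
        return kept  # too few points to judge outliers
    values = [v for _, v in kept]
    med = statistics.median(values)
    mad = statistics.median([abs(v - med) for v in values])
    if mad == 0:
        return kept  # no spread, so no meaningful outlier score
    # Modified z-score: 0.6745 rescales the MAD to be comparable to a std dev.
    return [(ts, v) for ts, v in kept if 0.6745 * abs(v - med) / mad <= z_limit]


raw = [(0, 97.0), (1, 100.0), (2, 102.0), (2, 102.0), (3, None),
       (4, 98.0), (5, 101.0), (6, 99.0), (7, 100.0), (8, 5000.0)]
cleaned = clean(raw, cutoff_ts=1)
print(cleaned)
# [(1, 100.0), (2, 102.0), (4, 98.0), (5, 101.0), (6, 99.0), (7, 100.0)]
```

The median-based rule is used instead of mean and standard deviation because a single extreme sample (the 5000 ms spike) would otherwise inflate the very statistics used to detect it.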

C. Create and Deploy Models

Here we choose from the various readily available algorithms, such as linear regression, decision trees and clustering, and create models for the prescriptive process.
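To make “create a model” concrete, here is a minimal one-feature linear regression (ordinary least squares) relating user load to response time. This is only one of the algorithm families listed above, written out by hand for transparency; in practice a library such as scikit-learn would typically be used, and the observations are hypothetical.

```python
from __future__ import annotations


def fit_linear(xs: list[float], ys: list[float]) -> tuple[float, float]:
    """Ordinary least squares for y = slope * x + intercept (one feature)."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need at least two paired observations")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("x values must not all be identical")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


# Hypothetical observations: concurrent users vs. average response time (ms).
users = [500, 1000, 1500, 2000]
response_ms = [450, 700, 950, 1200]
slope, intercept = fit_linear(users, response_ms)
print(slope, intercept)           # 0.5 200.0
print(slope * 3000 + intercept)   # predicted ms at 3,000 users: 1700.0
```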

D. Execute Models against Data

Here we run the models against the available data set. This produces results that must be analyzed for success or failure.
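Executing models against data can be as simple as running each candidate model over the observations and pairing every prediction with the measured value, so that the next step can judge success or failure. In this sketch the models are plain Python callables, and both the candidate models and the observations are hypothetical.

```python
from typing import Callable, Dict, List, Tuple

Observation = Tuple[float, float]   # (user load, measured response ms)
Model = Callable[[float], float]    # user load -> predicted response ms


def execute_models(models: Dict[str, Model],
                   observations: List[Observation]) -> Dict[str, List[Tuple[float, float]]]:
    """Run every candidate model over the data set, pairing each
    measured value with the model's prediction for later evaluation."""
    return {
        name: [(measured, model(load)) for load, measured in observations]
        for name, model in models.items()
    }


# Hypothetical candidates, e.g. produced by the model-building step.
models = {
    "linear": lambda load: 0.5 * load + 200,
    "flat": lambda load: 800.0,
}
observations = [(500, 460.0), (1000, 690.0), (2000, 1210.0)]
results = execute_models(models, observations)
print(results["linear"])  # [(460.0, 450.0), (690.0, 700.0), (1210.0, 1200.0)]
```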

E. Monitoring and Analyzing

Here we monitor the results and check whether the objectives are satisfied. This determines whether the models are accurate and produce the desired results.
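One way to decide whether a model is accurate and whether the objective is satisfied is sketched below. It assumes mean absolute percentage error (MAPE) as the accuracy measure and a response-time SLA as the objective; the 5% tolerance, the function names and the sample values are assumptions for illustration.

```python
from typing import Dict, List, Tuple


def mape(pairs: List[Tuple[float, float]]) -> float:
    """Mean absolute percentage error over (actual, predicted) pairs.
    Actual values must be non-zero."""
    if not pairs:
        raise ValueError("no results to evaluate")
    return 100.0 * sum(abs(a - p) / abs(a) for a, p in pairs) / len(pairs)


def monitor(pairs: List[Tuple[float, float]], predicted_at_target_ms: float,
            sla_ms: float, max_error_pct: float = 5.0) -> Dict[str, object]:
    """Report whether the model is accurate enough and the objective is met."""
    error_pct = mape(pairs)
    return {
        "mape_pct": error_pct,
        "model_accurate": error_pct <= max_error_pct,
        "objective_met": predicted_at_target_ms <= sla_ms,
    }


report = monitor([(460.0, 450.0), (690.0, 700.0), (1210.0, 1200.0)],
                 predicted_at_target_ms=1450.0, sla_ms=1000.0)
print(report["model_accurate"], report["objective_met"])  # True False
```

An accurate model that predicts a missed objective is exactly the case that should trigger the recommendation step.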

F. Recommendations and Corrections

The final step of a prescriptive model is the “prescription” or “recommendation”: what we need to do to achieve our goals.
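A prescription can be framed as a search: among candidate configurations, pick the cheapest one that the model predicts will meet the objective. The sketch below does an exhaustive grid search over server count and thread-pool size. The performance model (`predict_p95_ms`), the cost function and the option ranges are all hypothetical.

```python
from itertools import product
from typing import Iterable, Optional, Tuple


def predict_p95_ms(users: int, servers: int, threads: int) -> float:
    """Hypothetical model: threads help until a server's 8 cores saturate."""
    workers = servers * min(threads, 8)
    return 150.0 + 12.0 * users / workers


def cost(servers: int, threads: int) -> float:
    """Hypothetical relative cost: servers dominate, threads cost a little."""
    return servers * 100.0 + threads * 1.0


def recommend(users: int, sla_ms: float,
              server_options: Iterable[int] = range(1, 21),
              thread_options: Iterable[int] = (2, 4, 8, 16)) -> Optional[Tuple[int, int]]:
    """Cheapest (servers, threads) predicted to meet the SLA, or None."""
    feasible = [
        (cost(s, t), s, t)
        for s, t in product(server_options, thread_options)
        if predict_p95_ms(users, s, t) <= sla_ms
    ]
    if not feasible:
        return None  # no candidate meets the SLA: escalate to a human
    _, servers, threads = min(feasible)
    return servers, threads


print(recommend(2500, sla_ms=1000.0))     # (5, 8)
print(recommend(100_000, sla_ms=1000.0))  # None
```

Exhaustive search is only practical for small configuration spaces; larger ones would call for an optimizer, but the shape of the output (a concrete configuration, or an explicit “cannot be met”) stays the same.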

G. Self-Healing and Auto-Tuning

Going a step beyond Prescriptive Analytics means implementing the recommendations. Using bots, we can automate the redeployment and reconfiguration of our system architectures according to the prescribed recommendations.
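The implementation step can be sketched as a small bot that compares the current configuration with the prescribed one and applies only the differences. The `apply` callback is a hypothetical hook standing in for whatever deployment or configuration-management tooling is in use; the setting names and values are illustrative, and `dry_run` defaults to `True` so that changes are reported before anything is touched.

```python
from typing import Callable, Dict, List, Tuple

Change = Tuple[str, object, object]  # (setting, current value, prescribed value)


def plan_changes(current: Dict[str, object],
                 prescribed: Dict[str, object]) -> List[Change]:
    """Only the settings whose current value differs from the prescription."""
    return [(key, current.get(key), value)
            for key, value in sorted(prescribed.items())
            if current.get(key) != value]


def self_heal(current: Dict[str, object], prescribed: Dict[str, object],
              apply: Callable[[str, object], None],
              dry_run: bool = True) -> List[Change]:
    """Apply the prescribed settings through a hypothetical `apply` hook."""
    changes = plan_changes(current, prescribed)
    if not dry_run:
        for key, _, value in changes:
            apply(key, value)      # stand-in for real deployment tooling
            current[key] = value   # record the new state once applied
    return changes


current = {"servers": 4, "threads": 8, "heap_gb": 4}
prescribed = {"servers": 5, "threads": 8, "heap_gb": 6}
applied = []
print(self_heal(current, prescribed, apply=lambda k, v: applied.append((k, v))))
# [('heap_gb', 4, 6), ('servers', 4, 5)]  (dry run: nothing applied yet)
self_heal(current, prescribed, apply=lambda k, v: applied.append((k, v)), dry_run=False)
print(applied)  # [('heap_gb', 6), ('servers', 5)]
```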

Note: Bots have already penetrated various fields such as research, commerce, insurance and health care. In the software industry, many bots are already used to deploy applications and code. With agility and DevOps taking precedence in recent times, quickly reconfiguring and deploying infrastructure has already become a reality. Putting these two together, reconfiguring and redeploying infrastructure based on the tuning recommendations from our prescriptive analytics models is not just possible but readily attainable.

III. Why Even Do it?

Prescriptive Analytics provides ample opportunities for achieving targets, with detailed action items on what needs to be done to achieve them. The use cases are abundant, be it improving customer service, increasing profit margins, enhancing employee performance, improving profitability, reducing risk or optimizing resources. But considerable effort still needs to be spent on implementing the prescriptions or recommendations. Automating that process ensures the prescriptions are acted on every time, making the targets far more consistently achievable.

In our specific use case, with digital disruption playing a major role in most businesses across the globe, ensuring a best-in-class digital customer experience is the need of the hour for any business that wants to remain relevant.

So, moving beyond traditional performance testing methodologies, which only validate whether the current infrastructure can support the anticipated target user load, we now recommend and implement the ideal resource requirements, along with optimal configuration settings and tuning recommendations, to provide the best user experience for our customers even before an untoward incident happens.

IV. How does it differ from Container/Cloud-Based Auto Scaling?

To understand how Self-Healing/Auto-Tuning differs from, and overcomes the issues of, the auto scaling options supplied by cloud vendors, we outline below some common misconceptions that surround auto scaling and compare each against our approach.

A. Auto Scaling is Easy

Creating auto scaling architectures requires a considerable amount of time. While setting up a load-balanced scaling group is straightforward with the vendor's predefined tools and utilities, creating instances with minimal start-up times and ideal configurations requires custom scripts, which are tedious to build.

B. Auto Scaling is Load Based

CPU load is a commonly used trigger, but auto scaling goes beyond scaling on load alone. Apart from CPU load, it can monitor other triggers such as queue length, but it can only spawn new server instances once a threshold on that metric is reached. This is still a trigger-based process, and if there happen to be any network or connection issues at that moment (however rare they may be), the result can be significant time, cost and performance implications.
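To illustrate why threshold triggers are reactive, the sketch below replays a series of queue-length samples through a simple rule: launch an instance when the queue exceeds a threshold, then wait out a cooldown. The rule, threshold, cooldown and samples are generic assumptions, not any particular cloud vendor's policy engine.

```python
from typing import List, Optional, Sequence


def reactive_scale_events(queue_lengths: Sequence[int], threshold: int,
                          cooldown: int) -> List[int]:
    """Time steps at which a threshold rule would launch a new instance.

    The rule only reacts once the queue has already crossed the threshold,
    then ignores further breaches until `cooldown` steps have passed.
    """
    events: List[int] = []
    last_fired: Optional[int] = None
    for t, length in enumerate(queue_lengths):
        cooling_down = last_fired is not None and t - last_fired < cooldown
        if length > threshold and not cooling_down:
            events.append(t)
            last_fired = t
    return events


# Hypothetical per-minute queue lengths during a traffic ramp.
queue = [5, 8, 12, 30, 55, 80, 90, 40, 10]
print(reactive_scale_events(queue, threshold=50, cooldown=3))  # [4]
```

Although the queue starts growing at step 2, nothing happens until step 4, and the new instance still has to boot after that.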

C. Capacity and Demand Match Each Other

If capacity always matched demand, provisioning resources would be very easy, but this is rarely the case. If unforeseen incoming traffic causes a sudden spike in demand that exceeds the configured scaling limit, the application may crash or, at best, will almost certainly slow down.

D. Ideal Base Images are Quick to Configure

Auto scaling is quicker if the base image is light. That is, deploying a large, fully functional base image with all the necessary configurations is comparatively more time-consuming than spinning up resources from stock images and then building on top of the vanilla image using configuration management tools. But even then, considerable time (several minutes) is lost building on top of a vanilla image. This could prove fatal if the system is already under heavy load and needs to scale up quickly to meet increasing demand.
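The cost of those lost minutes can be estimated with back-of-the-envelope arithmetic. Assuming constant arrival and service rates (a simplified fluid model), the requests that pile up while a new instance boots equal the excess arrival rate multiplied by the boot time. The rates and boot times below are hypothetical.

```python
def backlog_during_boot(arrival_rps: float, capacity_rps: float,
                        boot_seconds: float) -> float:
    """Requests queued while extra capacity boots, assuming constant rates."""
    excess_rps = max(0.0, float(arrival_rps - capacity_rps))
    return excess_rps * boot_seconds


# Hypothetical figures: 1,200 req/s arriving, 1,000 req/s currently served.
print(backlog_during_boot(1200, 1000, boot_seconds=300))  # vanilla image + config: 60000.0
print(backlog_during_boot(1200, 1000, boot_seconds=60))   # pre-built image: 12000.0
```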

With Self-Healing and Auto-Tuning, we instead use predictive analytics to first identify future demands and needs. With prescriptive analytics, we then determine the exact amount of resources required to meet the predicted needs. Instead of building fixed-size instances with preset configurations for threads, queues etc., we can make educated calculations to arrive at the configurations that perform best under the predicted circumstances, and automatically provision the necessary resources even before any untoward event occurs. This way, we lose neither performance nor time on set-up or configuration after a critical threshold is reached. The result is a fully functional, perfectly configured system, best suited to meet demand at the crucial time of need.
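The predictive alternative can be sketched as turning a load forecast into a provisioning schedule: compute the instances each future interval needs (with some headroom), then start each scale-out early enough to cover the boot time. The forecast values, per-instance capacity, 20% headroom and one-interval boot time are assumptions; scale-in is deliberately left out to keep the sketch short.

```python
from typing import List, Tuple


def provisioning_plan(forecast: List[int], capacity_per_instance: int,
                      headroom_pct: int = 20, boot_intervals: int = 1,
                      min_instances: int = 1) -> Tuple[List[int], List[Tuple[int, int]]]:
    """Turn a load forecast into instance counts and scale-out start times.

    forecast: predicted load per future interval (e.g. requests/s).
    Assumes the instances required for the first interval are already running.
    Returns (instances required per interval,
             [(interval at which to start booting, instances to add), ...]).
    """
    per_instance = 100 * capacity_per_instance
    required = []
    for load in forecast:
        needed = load * (100 + headroom_pct)   # load with headroom, scaled by 100
        # Integer ceiling division avoids floating-point rounding surprises.
        required.append(max(min_instances, (needed + per_instance - 1) // per_instance))
    orders = []
    running = required[0]  # scale-in is not modeled, so capacity never drops
    for t in range(1, len(required)):
        extra = required[t] - running
        if extra > 0:
            # Start booting early so the instances are ready by interval t.
            orders.append((max(0, t - boot_intervals), extra))
            running = required[t]
    return required, orders


# Hypothetical hourly forecast (requests/s) and 500 requests/s per instance.
required, orders = provisioning_plan([800, 900, 1500, 2400, 2000], capacity_per_instance=500)
print(required)  # [2, 3, 4, 6, 5]
print(orders)    # [(0, 1), (1, 1), (2, 2)]
```

Unlike the threshold rule, every instance here is ordered one interval before the load that needs it arrives.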

Image source: Wikipedia

V. Challenges and Focus Points

As with any idea, our approach has some challenges or hurdles. Some of them are:

- Prescriptive analytics is fairly nascent, so a lack of skills, and the difficulty of finding the right models and building on them, could prove a significant challenge to overcome.

- Quality of data determines the quality of prescription. Insufficient or inaccurate data will produce inaccurate recommendations.

- The cost of acquiring experts in data analytics is a tradeoff that must be weighed against the expected benefits.

- Analytics tools need to be evaluated thoroughly before any software is purchased, to validate that their algorithms and procedures support the required analysis. (Free software is, of course, also available.)

- A lack of understanding of future business needs, or a lack of critical thinking.

- Over-reliance on prescriptive results, especially at this early stage of adoption, could have a detrimental effect if the models are incorrect or inaccurate.

VI. Your Future is how you Design it to be!

Prescriptive analytics is already here, and it is only a matter of time before Self-Healing becomes common practice. In our specific use case, we could stop worrying about whether applications will survive a Black Friday sale or whether a celebrity’s tweet has broken the internet. With Self-Healing/Auto-Tuning, our applications would have predicted the load and accordingly protected and optimized themselves against crashing or performing poorly, ensuring optimal performance even before any untoward incident happens. With prescriptive analytics, the ideal configuration for extracting the best performance from the system can be set, making the application truly elastic. This is just one use case; the applications and possibilities are endless.

VII. References

[1] Halo Business Intelligence. (2016). Descriptive, Predictive, and Prescriptive Analytics Explained. Retrieved from https://halobi.com/blog/descriptive-predictive-and-prescriptive-analytics-explained/