With advances in technology, ubiquitous computing and the omnipresence of the internet go hand in hand in today’s society. They have changed our standard of living so radically that they have become indispensable to our lives.

One such technology is ‘Augmented Reality’. What really is this? It is a system that generates a composite view for the user, combining the real scene as viewed by the user with a virtual scene generated by the computer that augments the real scene with additional information. To clarify further, it is not the same as virtual reality, wherein reality is replaced as a whole and the end user has a very minuscule role to play. In simple terms, augmented reality enhances the quality of real-world information perceived by a human, whereas virtual reality replaces reality itself. The role of augmented reality has grown so much that augmented reality testing will soon be commonplace.

Today, we see many applications that support augmented reality, giving the user real-time updates in combination with exciting animations; for example, Layar, Wikitude, Sky Map and Zappar are all available for Android and iOS. Besides individual apps, organizations including technology giants are working on gaining a foothold in the augmented reality world. For example, head-mounted displays (HMDs) such as Google Glass, Meta Glasses and Oculus Rift are now publicly available.

These apps leverage a mobile device’s built-in camera, GPS, compass, accelerometer, gyroscope, and other sensors to deliver a real-time interactive experience to the end user. Currently, all these apps and HMDs are primarily used for advertising, navigation, social activities and gaming.

As a software tester, I can envision how augmented reality would soon interlace with the testing discipline. And when that happens, we will need to be ready to take on testing these complex systems within well-defined time slots.

As we prepare for this shift, let’s look at some radical changes in how we perform our testing activities.

Even before we look at how to test augmented reality systems, let’s see how they will benefit the testing function. Can we, for instance, use these systems to bring down our manual test efforts?

Wearing a Tester’s Hat

This is where my role comes in. I have been fascinated by augmented reality ever since I learned about the Sixth Sense Technology of Pranav Mistry (the MIT-ian). After playing around with these apps for some time, I thought: why not leverage them to test a given application?

The augmented reality system would specifically assist us in providing on demand results.

Coincidentally, I found a definition of augmented reality-based testing on Wikipedia that reads as follows:

“Augmented reality-based testing (ARBT) is a test method that combines augmented reality and software testing to enhance testing by inserting an additional dimension into the testers’ fields of view. For example, a tester wearing a head-mounted display (HMD) or Augmented reality contact lenses that places images of both the physical world and registered virtual graphical objects over the user’s view of the world can detect virtual labels on areas of a system to clarify test operating instructions when performing tests on a complex system.”

Augmented Reality Testing

So now, how exactly can we use this in our testing domain? I’ve identified some key areas where we can use Augmented reality based systems and have grouped them under two main categories – Time consumption and Skill requirements:

Under time consumption, points to be considered include:

1) Test setup time

2) Duplicate error reporting

3) Test report creation

4) Training/Guidance/Support

5) Knowledge sharing

Under skill requirements, points to be considered include:

1) Keeping track of frequent hardware, software updates and requirements

2) Need for more knowledge / information sharing

3) Need for senior multi-disciplined testers due to complex systems

Now, categorizing these points is easy. How do we practically implement this?

Let us take a closer look at some of the points listed above. For setting up test machines, why not consider developing an AR-capable app that would query the organization’s main server in real time to fetch data about a system located anywhere in the organization? For this to work, a person just needs to hold a smart device in front of that system’s login screen.

The augmented reality system would then identify the test machine through a unique tracker and, after querying the server, return configuration details such as the installed OS and the versions of the browsers and plugins installed on that system. We can get to know all these details without touching or accessing that system.
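The lookup step described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the tracker IDs, the `MachineConfig` fields, the sample data, and the in-memory `INVENTORY` dictionary (standing in for the organization's main server) are all hypothetical. A real app would decode the tracker from the camera frame and query the server over the network.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class MachineConfig:
    """Configuration details the AR overlay would display (hypothetical fields)."""
    hostname: str
    os: str
    browsers: dict = field(default_factory=dict)  # browser name -> version
    plugins: list = field(default_factory=list)


# Stand-in for the organization's main server; the entry is made-up sample data.
INVENTORY = {
    "TRK-0001": MachineConfig(
        hostname="qa-lab-01",
        os="Windows 8.1",
        browsers={"Firefox": "38.0", "Chrome": "43.0"},
        plugins=["Flash", "Java"],
    ),
}


def lookup_config(tracker_id: str) -> Optional[MachineConfig]:
    """Return the configuration registered for a tracker, or None if unknown."""
    return INVENTORY.get(tracker_id.strip().upper())


def overlay_text(tracker_id: str) -> str:
    """Build the text an AR overlay could show next to the scanned machine."""
    config = lookup_config(tracker_id)
    if config is None:
        return f"Unknown tracker: {tracker_id}"
    browsers = ", ".join(
        f"{name} {version}" for name, version in sorted(config.browsers.items())
    )
    plugins = ", ".join(config.plugins) or "none"
    return (
        f"{config.hostname} | OS: {config.os} | "
        f"Browsers: {browsers} | Plugins: {plugins}"
    )


if __name__ == "__main__":
    # Simulate scanning the tracker shown on a test machine's login screen.
    print(overlay_text("trk-0001"))
```

Keeping the lookup separate from the overlay formatting means the dictionary could later be swapped for a real server call without touching the display logic.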

Similarly, let’s look at the training part. To promote better knowledge sharing, why not record a session so that it can be reused in future sessions? To this end, I created virtual walk-throughs and demos of the application for newcomers. For this to work, one just needs to hold a smart device in front of the application; the AR system scans and identifies the application and voilà, the session starts!

A screenshot or a pre-recorded walk-through would start automatically and would help the newcomer bootstrap without requiring a dedicated expert/mentor.

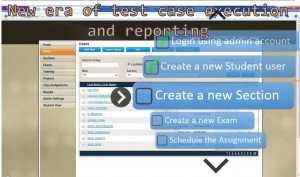

There are times when we have thousands of test cases to execute. Glancing back and forth between the application and the test cases that need to be run becomes a physical and mental strain. Despite all due diligence, this kind of mundane activity is prone to manual errors. Wouldn’t it be super convenient if the information we need were overlaid in our line of vision?

Yes, I am talking about using Google Glass (or any other available HMD, for that matter).

Say, for example, I have to perform a smoke test of an application and I am wearing Google Glass.

Through augmented reality I will be able to view all the previously executed test cases, my current test case, and all the pending test cases just by waving my hand in the air or by using voice commands. Moreover, I can pass or fail a test case using hand gestures alone, for example a thumbs-up or thumbs-down gesture. And after the tests are executed, a pass/fail analysis report can be generated instantly with the help of augmented reality systems.
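To make the gesture-driven flow a little more concrete, here is a minimal sketch of the bookkeeping behind it. It assumes that some HMD software has already recognized the gesture and hands over a label; the labels `thumbs_up` and `thumbs_down`, the `SmokeRun` class, and the test case names are hypothetical and do not come from any real Google Glass API.

```python
from collections import Counter

# Hypothetical gesture labels; a real HMD SDK would supply its own events.
GESTURE_TO_VERDICT = {"thumbs_up": "pass", "thumbs_down": "fail"}


class SmokeRun:
    """Tracks executed, current, and pending test cases for one session."""

    def __init__(self, test_cases):
        self.pending = list(test_cases)
        self.results = {}  # test case name -> "pass" or "fail"

    @property
    def current(self):
        """The test case currently in view, or None when the run is finished."""
        return self.pending[0] if self.pending else None

    def record_gesture(self, gesture):
        """Apply a recognized gesture to the current test case.

        Unrecognized gestures are ignored so that stray hand movements
        do not change a verdict. Returns True if a verdict was recorded.
        """
        verdict = GESTURE_TO_VERDICT.get(gesture)
        if verdict is None or self.current is None:
            return False
        self.results[self.pending.pop(0)] = verdict
        return True

    def report(self):
        """Summarize the run as pass/fail/pending counts plus a pass rate."""
        counts = Counter(self.results.values())
        executed = len(self.results)
        return {
            "passed": counts["pass"],
            "failed": counts["fail"],
            "pending": len(self.pending),
            "pass_rate": counts["pass"] / executed if executed else 0.0,
        }


if __name__ == "__main__":
    run = SmokeRun(["login", "search", "checkout"])
    run.record_gesture("thumbs_up")    # login passes
    run.record_gesture("wave")         # ignored: not a verdict gesture
    run.record_gesture("thumbs_down")  # search fails
    print(run.report())
```

The report is built from the recorded verdicts alone, so it can be produced at any point in the run, not only at the end.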

The points above are just a few examples of how this emerging technology can be used in software testing.

I have been attempting all of these at my end, leveraging existing and upcoming tools and technologies to benefit the testing discipline. Are you excited about trying these too? Why not start by using apps such as Google Goggles and Word Lens while doing localization testing?

We can use AR as our third eye and establish a workforce ready to test in any environment. We can enhance our working capabilities and improve our productivity, thereby freeing up time to focus on more challenging tasks that require human attention and intervention. We still have a long way to go, but this is a very promising start, and I can’t wait to see us (testers) using augmented reality in the coming years to help us test better, faster and more easily.

The focus of this blog is on how to tap AR’s resources to help testers enhance their efficiency and overall operational quality. I will share my thoughts on how to test AR-based systems in subsequent posts; for now, it’s all about bringing AR’s prowess into play to supplement a tester’s endeavors.

References:

- https://en.wikipedia.org/wiki/Augmented_reality-based_testing

About the Author

Nandan Chhabra is a computer science graduate with over 2 years of experience, currently working as a Software Testing Engineer with QA InfoTech. He has always been fascinated by new technologies, gadgets, hacking, and computer games. This led to his interest in augmented reality, inspired by the Sixth Sense Technology (developed by Pranav Mistry). In his leisure time, Nandan loves to listen to progressive house music or to take a few casual snaps.
