This is the first post in a four-part series on Session-Based Test Management. In this post, Mark shares how to strategise testing using BDD.

Behaviour-driven development (BDD) is often misunderstood. Testers sometimes mistake it for a testing methodology, using Gherkin syntax to write test cases and over-relying on automated acceptance testing tools for test coverage. But BDD is not a testing methodology; it's a team methodology for communication and collaboration that ensures the team delivers what the business wants, and testing can play a role in that. Testers can take advantage of BDD activities to help with their testing, and those activities provide one piece of the testing strategy puzzle. So what other pieces are required to build a great testing strategy?

Modelling testing

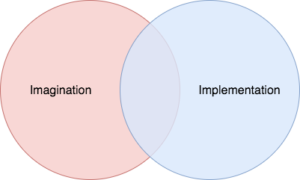

Consider this model that James Lyndsay created to explain the value of exploratory testing. We can use it as an abstract model for the goals of testing.

As testers, our goal is to reveal and share information from both sides to help stakeholders make informed decisions. In James' model, that information is divided between imagination and implementation: what the business wants in a product, and what the business currently has in a product. The intention of the model is that the more we learn about both the imagination and implementation circles, the more they will overlap, resulting in a successfully delivered product.

Testers can exploit collaborative activities in BDD to help test the imagination side of the diagram by actively listening to business requirements, asking questions and sharing their current knowledge of the product. This helps the team create feature files that drive the development of new features. However, when testing the implementation, we have to be careful with how we use feature files. Basing our test execution on feature files alone is dangerous because it may limit what we learn about the implementation. Feature files are designed to help us with specific risks, such as:

- Has the team understood what the business wants to be delivered?

- Has the team delivered what the business wants?

- Have regression issues caused the product to drift away from what the business wants?

Therefore, test execution based on feature files alone will only help us confirm the overlap between imagination and implementation, that is, what we expected to see in the product. Ignoring what we didn't expect to see in our product can be a massive risk, not just because we may miss bugs that will severely impact our product, but because it becomes harder to judge whether we are on the right track to deliver what the business wants.

So we are left with a large amount of information in the implementation circle that we need to discover, and we can do this by running exploratory testing sessions. But how do we determine which test sessions we want to run?

Working with risks

Specifically, the information we are looking to reveal is related to risk. Good testing will reveal which risks exist within the product and how likely they are to threaten the product and/or the business. Just as we cannot exhaustively test a product, we also cannot identify and learn about every risk. Some risks can be identified up front, whilst others might be identified during testing and noted for future exploration. Once our feature files are created, we can use them as a source for identifying perceived risks to our product, which can then be converted into charters for exploratory test sessions to be run when the time is right.
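To make the step from risks to charters a little more concrete, here is a minimal Python sketch. It assumes a hypothetical `Risk` record and uses the widely used "Explore <target> with <resources> to discover <information>" charter template popularised by Elisabeth Hendrickson; the Checkout risks are made-up examples, not taken from a real project.

```python
from dataclasses import dataclass


@dataclass
class Risk:
    """A perceived risk noted while reviewing a feature file (hypothetical structure)."""
    feature: str      # the feature file the risk was identified from
    target: str       # the area of the product to explore
    resources: str    # tools, data or techniques to explore with
    information: str  # what we hope to learn about the risk


def to_charter(risk: Risk) -> str:
    """Render a risk as a charter using the 'Explore ... with ... to discover ...' template."""
    return f"Explore {risk.target} with {risk.resources} to discover {risk.information}"


def build_backlog(risks: list[Risk]) -> dict[str, list[str]]:
    """Group charters by feature so sessions can be picked up when the time is right."""
    backlog: dict[str, list[str]] = {}
    for risk in risks:
        backlog.setdefault(risk.feature, []).append(to_charter(risk))
    return backlog


# Hypothetical risks identified from an imaginary "Checkout" feature file.
risks = [
    Risk("Checkout", "the payment form", "invalid card numbers",
         "how validation errors are reported"),
    Risk("Checkout", "order confirmation", "slow network conditions",
         "whether duplicate orders can be created"),
]

for feature, charters in build_backlog(risks).items():
    print(feature)
    for charter in charters:
        print(f"  - {charter}")
```

Grouping charters by feature keeps each session traceable to the feature file it came from, while still leaving the session itself free to explore beyond what that file describes.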

When and how you identify risks depends on how your team works, but it's good to have at least one formal risk identification session for each feature. You can initially record risks during the collaborative sessions where a feature's behaviour is defined. You could also take advantage of planning sessions to ask other members of the team to identify risks, maybe even gamifying that process. Or you could set up explicit sessions after the feature has been defined and planned to review risks, either by yourself or with your team.

Read Part 2 of Session-Based Test Management

About The Author

Mark is a tester, teacher, mentor, coach and international speaker, presenting workshops and talks on technical testing techniques. He has worked on award-winning projects across a wide variety of technology sectors, including broadcast, digital, financial and the public sector, working with various web, mobile and desktop technologies.

Mark is part of the Hindsight Software team, sharing his expertise in technical testing and test automation and advocating for risk-based automation and automation in testing. He regularly blogs at mwtestconsultancy.co.uk and is also the co-founder of the Software Testing Clinic in London, a regular workshop where new and junior testers receive free mentoring and lessons in software testing. Mark has a keen interest in various technologies and regularly develops new apps and devices for the Internet of Things. You can get in touch with Mark on Twitter: @2bittester